How Nation-States Use AI as a Cyber Weapon

Nation-states are actively weaponising AI for cyber attacks. Understand the threats, tactics, and how security teams must evolve to defend in 2026.

The intelligence briefing every security professional needs in 2026

In February 2024, Microsoft and OpenAI published joint threat intelligence research that confirmed what the security community had been observing for months: nation-state hacking groups were actively using large language models to improve their offensive operations.

The groups named were not speculative actors. They were Forest Blizzard (also tracked as Fancy Bear), Emerald Sleet (Kimsuky), Crimson Sandstorm, Charcoal Typhoon, and Salmon Typhoon: established, attributed, long-running threat actors linked to Russia, North Korea, Iran, and China. These were not emerging groups experimenting with new tools. They were the first tier of nation-state cyber capability.

This is not a future threat. It is the current operating environment. And most organisations are defending against it with frameworks, tools, and talent strategies built for a pre-AI threat landscape.

THREAT INTELLIGENCE NOTE

What follows is based on open-source threat intelligence, government advisories, security vendor research, and documented incident analysis. No classified material is referenced or implied. All sources are publicly available. The purpose is professional education, not operational intelligence disclosure.

AI has not invented a new category of cyber attack. It has removed the human bottlenecks that previously limited how fast, how precisely, and at what scale nation-states could conduct the attacks they were already running.

What Changed and When

Nation-state cyber operations have always been constrained by the same three factors that constrain any complex human endeavour: time, skill, and scale. Developing a convincing spear-phishing campaign targeting a specific individual at a specific organisation requires research. Writing custom malware that evades a specific organisation's defences requires expertise. Running thousands of simultaneous reconnaissance operations requires personnel.

AI compresses all three constraints simultaneously.

The inflection point was not a single event. It was a convergence. In 2022 and 2023, three things happened in close succession that changed the calculus: frontier language models became capable enough to write convincing human text and functional code; the cost of accessing those models dropped to near zero; and the open-source AI ecosystem produced tools that could be fine-tuned on offensive security datasets without any commercial platform involvement.

Nation-state actors, with the resources to move fast and the intent to weaponise every available capability, were among the first to operationalise what was now available.

The barrier that previously separated sophisticated nation-state capability from lower-tier actors was expertise. AI is dissolving that barrier. The tactics that once required years of tradecraft development can now be approximated by any actor with the motivation to learn the prompts.

The Six Ways AI Is Being Weaponised

Each of the following attack categories represents a documented capability that has moved from theoretical to operational. These are not predictions. They are current threat landscape realities.

01. AI-Generated and AI-Mutated Malware

THREAT LEVEL: CRITICAL

Traditional malware development requires skilled reverse engineers, months of testing, and significant operational security to avoid attribution. AI compresses this to days. More significantly, AI can generate malware variants continuously, each one different enough to evade signature-based detection whilst maintaining the same functional payload. The result is a threat that evolves faster than defenders can analyse.

REAL OPERATION: Microsoft and OpenAI confirmed in early 2024 that Forest Blizzard (GRU-linked, Russia) used LLMs to research satellite and radar technologies relevant to military operations and to automate scripting tasks for offensive tooling. This was not experimentation. It was integration into operational workflow.

02. AI-Powered Spear Phishing at Industrial Scale

THREAT LEVEL: CRITICAL

Spear phishing has always been the most effective initial access vector in nation-state operations. Its limitation was the human time required to research a target, craft a convincing communication, and personalise it sufficiently to defeat suspicion. AI eliminates that limitation entirely. A model trained on a target's public writings, social media, professional communications, and known relationships can generate a phishing message that their closest colleagues would struggle to identify as inauthentic.

REAL OPERATION: Kimsuky (North Korea) has been documented using AI to generate highly personalised outreach to academic researchers, policy experts, and government officials. The messages reference real projects, real relationships, and real institutional contexts with an accuracy that previous social engineering operations could not achieve at scale.

03. AI-Accelerated Reconnaissance and Vulnerability Discovery

THREAT LEVEL: HIGH

Before any exploitation, attackers map the target. This reconnaissance phase, identifying exposed systems, understanding network architecture, profiling personnel, and discovering vulnerabilities, was previously slow, noisy, and resource-intensive. AI makes it fast, quiet, and cheap. Automated agents can scan, correlate, and analyse attack surface data at a speed that compresses the window between initial access and meaningful exploitation.

REAL OPERATION: Volt Typhoon (China), which has been pre-positioning inside US critical infrastructure networks for years, uses sophisticated reconnaissance techniques to identify and exploit living-off-the-land opportunities. AI-assisted analysis of network topology and user behaviour patterns accelerates this positioning phase significantly.

04. Adversarial Attacks on AI Defender Systems

THREAT LEVEL: HIGH

As organisations deploy AI for threat detection, anomaly monitoring, and automated response, they create a new attack surface. Adversarial AI techniques allow attackers to craft inputs that deliberately confuse or evade AI-based security systems. A model trained to detect malicious network traffic can be defeated by inputs engineered to fall just outside its detection boundaries. The defender's AI becomes a known quantity that the attacker can probe and circumvent.

REAL OPERATION: This is documented in academic threat research and is increasingly referenced in threat actor TTPs. The attack on AI-based email security systems, where adversarial perturbations are applied to phishing content to evade ML classifiers, is now a standard technique in advanced toolkits.

05. AI-Powered Influence Operations and Synthetic Media

THREAT LEVEL: HIGH

Nation-state information warfare is not new. What is new is the scale, believability, and operational cost of synthetic media production. Deepfake video, AI-generated audio impersonating known individuals, synthetic news articles, and automated persona networks operating across social platforms can now be produced at a fraction of the cost and time they required two years ago. The line between what is real and what is generated is becoming genuinely difficult to locate.

REAL OPERATION: Russia's information operations infrastructure has incorporated AI-generated content at scale. Multiple documented operations have used synthetic video and audio content to amplify narratives, discredit public figures, and create apparent evidence for events that did not occur. The authenticity problem is no longer theoretical.

06. Autonomous Cyber Agents: The Emerging Frontier

THREAT LEVEL: EMERGING-CRITICAL

The most significant near-term development in nation-state AI cyber capability is autonomous attack agents: AI systems that can plan and execute multi-stage attack chains with minimal human direction. A human operator sets an objective. The agent determines the path, identifies the tools, executes the steps, and adapts when it encounters obstacles. This is not yet fully deployed at scale. But the research exists, the prototypes have been demonstrated, and the nation-states with the most sophisticated AI programmes are investing heavily.

REAL OPERATION: Academic security research published in 2024 demonstrated that frontier AI models could autonomously exploit known web application vulnerabilities with high success rates when given access to basic tooling. The gap between research demonstration and operational deployment by well-resourced nation-state actors is measured in months, not years.

The Asymmetry Problem

There is a structural problem at the heart of the AI cyber warfare landscape that no amount of defensive investment fully resolves.

Attackers operate with a fundamental advantage: they choose when, where, and how to attack. Defenders must protect everything, all the time, against an adversary they cannot fully see. AI amplifies this asymmetry.

Why AI helps attackers more than defenders

Asymmetric success criteria: Attackers need one success. Defenders need zero failures. AI allows attackers to generate and test thousands of approaches simultaneously until one works.

Defenders are predictable: The attacker knows the defender's AI tools are commercial and therefore analysable. They can probe defences with crafted inputs until they understand the detection boundaries.

Speed and accountability: Defenders must justify every investment in advance and demonstrate ROI. Attackers have no such constraint. Speed of capability deployment favours the attacker.

Proprietary training data: Nation-state actors can fine-tune AI models on classified datasets, proprietary malware libraries, and historical operation data that no commercial defender can access.

Bureaucratic asymmetry: Nation-state actors can deploy AI at the speed of a software update. Enterprise security programmes move at the speed of procurement, approval, and implementation cycles.
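The first point in this list, asymmetric success criteria, can be made concrete with a little arithmetic. The sketch below assumes every attempt succeeds independently with the same small probability, which is a simplification (real attempts are correlated and defences adapt). The function name and the figures are illustrative and are not drawn from any incident data.

```python
def breach_probability(p_single: float, attempts: int) -> float:
    """Chance that at least one of `attempts` independent tries succeeds.

    Assumes each try succeeds with the same probability `p_single`
    and that tries do not influence one another (a simplification).
    """
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be between 0 and 1")
    if attempts < 0:
        raise ValueError("attempts must not be negative")
    # P(at least one success) = 1 - P(every attempt fails)
    return 1.0 - (1.0 - p_single) ** attempts


if __name__ == "__main__":
    # Illustrative only: a tactic that works one time in a thousand.
    for n in (1, 100, 1_000, 10_000):
        print(f"{n:>6} attempts: {breach_probability(0.001, n):.1%}")
```

Under those assumptions, a tactic that works once in a thousand tries still succeeds at least once in roughly 63% of thousand-attempt campaigns. Cheap, automated generation of attempts is what moves an attacker along that curve.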

This is not an argument for despair. It is an argument for urgency. The professionals who understand this asymmetry are the ones who can make rational decisions about where to focus defensive investment to close the most critical gaps first.

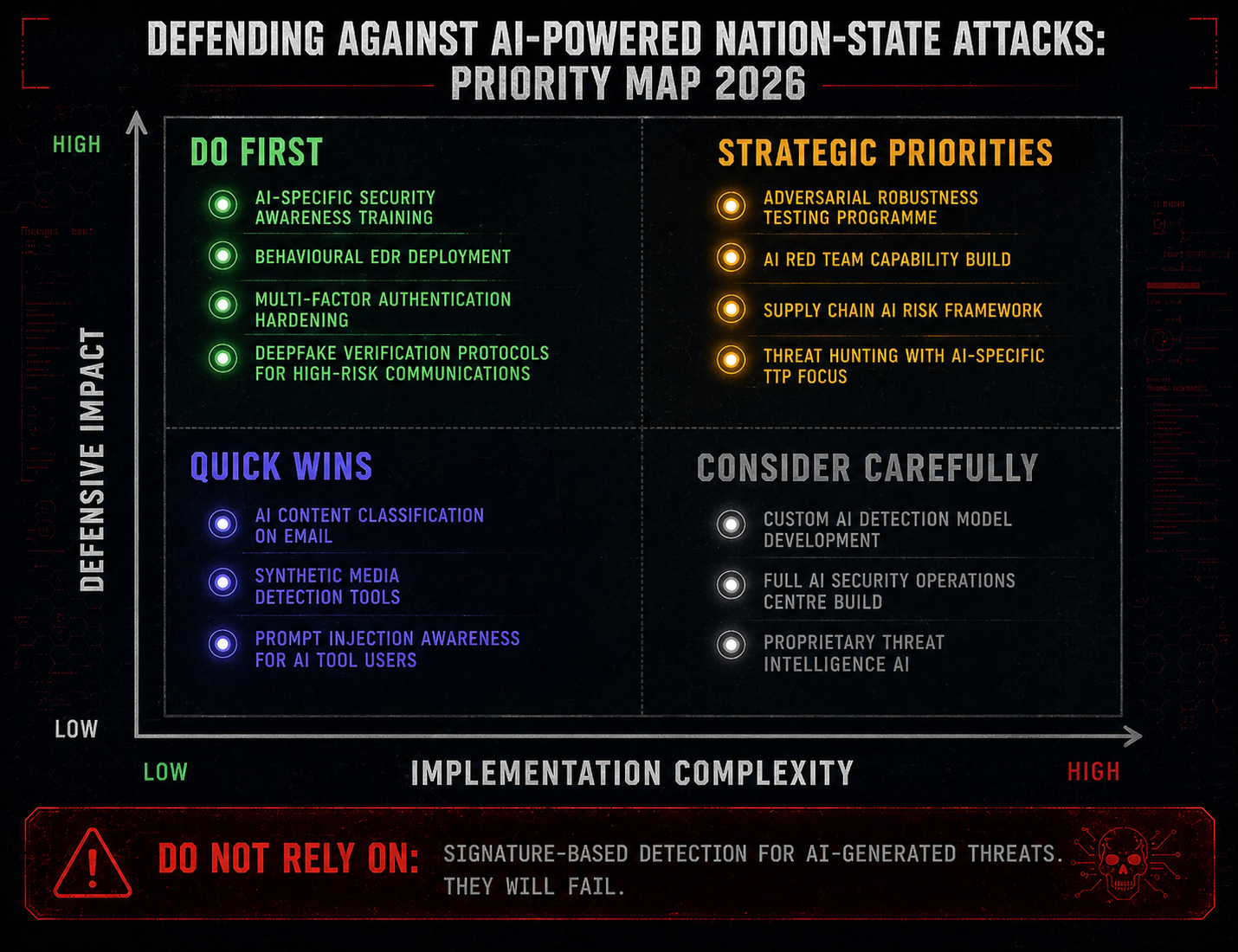

What Defenders Can Actually Do

The asymmetry is real. It is not an excuse for inaction. The organisations and professionals who are navigating this landscape most effectively share a consistent set of priorities.

Understand the threat before you select the tool

Most defensive AI purchasing decisions are made on the basis of vendor claims rather than a grounded understanding of what nation-state AI attack techniques actually look like. Before you evaluate any AI-powered security product, you need a practitioner-level understanding of what you are defending against. That means understanding how AI malware generation works, what AI-powered social engineering looks like at the point of delivery, and what adversarial inputs against ML systems actually do.

Elevate detection beyond signatures and rules

AI-generated malware variants are specifically optimised to evade signature-based detection. The defenders who are catching them are using behavioural detection: looking for what the malware does rather than what it looks like. This requires investment in EDR capability, proper threat hunting processes, and analysts who understand how AI-generated attack tooling behaves at a system level.
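The difference between matching what a file looks like and matching what it does can be sketched in a few lines. This is a toy illustration, not a detection engine: the `ProcessEvent` record, the process names, and the rule itself are hypothetical, and the "variant" is a harmless byte string that differs from the original by one padding byte.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessEvent:
    """Hypothetical, heavily simplified endpoint telemetry record."""
    parent: str
    child: str
    made_network_connection: bool


# Signature approach: a blocklist of hashes of previously seen files.
KNOWN_BAD_HASHES = {hashlib.sha256(b"sample-payload").hexdigest()}


def signature_match(file_bytes: bytes) -> bool:
    """Flag a file only if its exact hash is already on the blocklist."""
    return hashlib.sha256(file_bytes).hexdigest() in KNOWN_BAD_HASHES


# Behavioural approach (illustrative rule): a document application
# spawns a script host that then opens a network connection.
DOCUMENT_APPS = {"winword.exe", "excel.exe"}
SCRIPT_HOSTS = {"powershell.exe", "wscript.exe", "cmd.exe"}


def behaviour_match(event: ProcessEvent) -> bool:
    """Flag the activity pattern, regardless of what the file hashes to."""
    return (
        event.parent.lower() in DOCUMENT_APPS
        and event.child.lower() in SCRIPT_HOSTS
        and event.made_network_connection
    )


original = b"sample-payload"
variant = original + b"\x00"  # one extra byte produces a different hash
suspicious = ProcessEvent("WINWORD.EXE", "powershell.exe", True)
benign = ProcessEvent("explorer.exe", "cmd.exe", True)
```

In this sketch `signature_match(variant)` is false even though the content is functionally identical to the original, while `behaviour_match(suspicious)` fires without any knowledge of the file's hash. Production behavioural detection correlates far more telemetry than a single parent/child pair, but the principle is the same.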

AI-specific controls for AI-powered threats

Deepfake detection in your identity verification and video communication channels

AI-generated content classifiers on inbound email and document flows

Red team exercises that explicitly include AI-augmented attack scenarios

Adversarial robustness testing for any AI-based security tools you deploy

Supply chain AI risk assessment: your vendors' AI use is part of your attack surface
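Adversarial robustness testing, the fourth control above, can start as a simple harness that perturbs samples the tool already flags and counts how often the verdict flips. The sketch below is a minimal illustration under stated assumptions: `naive_classifier` is a toy keyword matcher standing in for a real model, the two perturbations are trivial text transformations, and every name here is hypothetical.

```python
import unicodedata
from typing import Callable, Sequence

Classifier = Callable[[str], bool]   # True means "flagged"
Perturbation = Callable[[str], str]

SUSPICIOUS_TERMS = ("verify your account", "urgent wire transfer")


def naive_classifier(text: str) -> bool:
    """Toy stand-in for a model: exact substring match on lowercased text."""
    return any(term in text.lower() for term in SUSPICIOUS_TERMS)


def hardened_classifier(text: str) -> bool:
    """Same matcher, but the input is normalised first."""
    folded = unicodedata.normalize("NFKC", text)
    # Drop invisible format characters such as the zero-width space.
    visible = "".join(ch for ch in folded if unicodedata.category(ch) != "Cf")
    # Collapse runs of whitespace into single spaces.
    return naive_classifier(" ".join(visible.split()))


def insert_zero_width(text: str) -> str:
    """Put a zero-width space between every pair of characters."""
    return "\u200b".join(text)


def double_spaces(text: str) -> str:
    """Replace every single space with two spaces."""
    return text.replace(" ", "  ")


def evasion_rate(
    classifier: Classifier,
    samples: Sequence[str],
    perturbations: Sequence[Perturbation],
) -> float:
    """Fraction of (flagged sample, perturbation) pairs that stop being flagged."""
    flagged = [s for s in samples if classifier(s)]
    trials = [(s, p) for s in flagged for p in perturbations]
    if not trials:
        return 0.0
    evaded = sum(1 for s, p in trials if not classifier(p(s)))
    return evaded / len(trials)


SAMPLES = ["Please verify your account today", "Urgent wire transfer needed"]
PERTURBATIONS = [insert_zero_width, double_spaces]
```

Calling `evasion_rate(naive_classifier, SAMPLES, PERTURBATIONS)` and then the same with `hardened_classifier` gives a before-and-after comparison for one hardening step. The useful output of a real exercise is the same kind of number, measured against the actual product with perturbations drawn from current threat research.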

The human layer is still the critical layer

Every AI-powered attack ultimately needs a human to take an action: click a link, open a document, approve a transaction, provide a credential. The most cost-effective defence against AI-powered social engineering is not technology. It is a workforce that understands the threat, has practised recognising it, and knows what to do when something feels wrong.

Security awareness training built for a pre-AI threat landscape is not sufficient. Specific, practitioner-delivered training on AI-powered phishing, synthetic media, and AI-enhanced impersonation is now a baseline requirement, not an advanced topic.

The professionals who will lead organisations through this environment are the ones who understand both the AI and the security dimensions. Not the technologist who does not understand threat intelligence. Not the threat analyst who does not understand how AI systems work. The intersection.

The Outlook

The nation-states at the frontier of AI cyber capability are not standing still. The models available to them in 2026 are more capable than those available in 2024. The ones available in 2028 will be more capable still. Every capability described in this article will be more developed, more automated, and more difficult to detect in twelve months than it is today.

This is not written to generate fear. Fear without direction is useless. It is written because the professionals who understand this threat at a technical and strategic level are the ones who can make organisations meaningfully more resilient against it. That capability is rare. Its value is increasing.

The question every security leader should be asking their team right now is not whether AI-powered attacks are real. They are documented, attributed, and active. The question is: is our defensive capability keeping pace with the threat we are actually facing? Not the threat from three years ago. The threat from this week.

The best intelligence is worthless if it does not change behaviour. The professionals who read this and act on it will be in a different position from the ones who read it and file it. The threat does not pause while we deliberate.