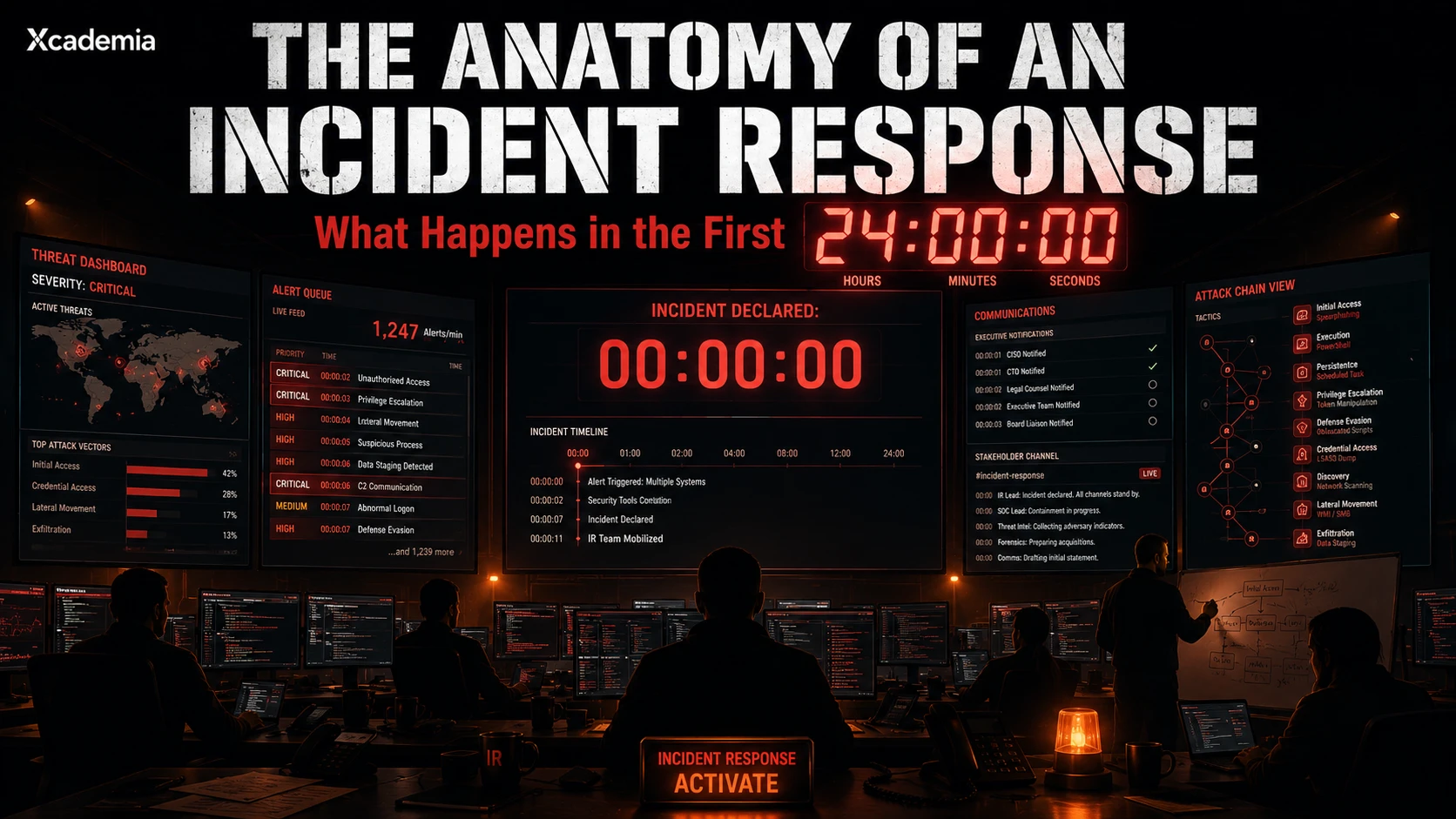

The Anatomy of an Incident Response

What actually happens in the first 24 hours of a cyber incident: detection, containment, escalation, eradication, recovery, and the high-pressure decisions that determine whether the attack is contained or becomes a full-scale organisational crisis.

What Actually Happens in the First 24 Hours

At 14:23 on a Tuesday afternoon, a security analyst in a London financial institution notices something unusual. An endpoint detection tool has flagged a process that should not be running on a finance department workstation. The alert is low severity. The analyst almost moves on.

They do not. They escalate. Four hours later, they are managing the largest incident their organisation has ever experienced: a ransomware operator who has been inside the network for eleven days, has compromised Active Directory, and has deployed encryption payloads that are beginning to execute across the estate.

What happens in the next twenty hours determines whether this becomes a recoverable incident or a catastrophic one. What happens in the first two hours determines whether the response ever gets in front of the attack.

Incident response is not what most organisations practise. Most organisations have an incident response plan that describes what should happen. Very few have practised what actually happens when everything is moving at once, the information is incomplete, and every decision has consequences.

Before the Incident: What Determines Whether You Are Ready

The quality of an incident response is determined before the incident occurs. The decisions made in the first two hours of a real incident are only as good as the preparation that preceded them.

(A) The incident response plan

Every mature organisation has one. Many have not been reviewed since they were written, sit in a SharePoint folder that most of the security team has never opened, and describe a response process that has never been tested. An incident response plan that has not been exercised under realistic conditions is a document, not a capability.

(B) The playbooks

Playbooks are the operational procedures that sit beneath the IR plan. Ransomware playbook. Data exfiltration playbook. Insider threat playbook. Business email compromise playbook. Each one describes the specific steps, decisions, and escalation thresholds for that incident type. The organisations that respond effectively to incidents are the ones where the analyst dealing with the first alert has a playbook that tells them exactly what to do next.
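One way to picture a playbook is as structured data rather than a prose document. The sketch below is illustrative only: the `Playbook` class, its field names, and the host-count escalation threshold are assumptions made for this example, not a standard schema. The steps are borrowed from the detection-phase actions described later in this article.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Playbook:
    """Hypothetical playbook: ordered steps plus one escalation threshold."""
    incident_type: str
    steps: list
    escalate_at_hosts: int  # assumed threshold; real playbooks define their own

    def next_step(self, completed: int) -> Optional[str]:
        """Return the next step to perform, or None when all steps are done."""
        if completed < len(self.steps):
            return self.steps[completed]
        return None

    def must_escalate(self, affected_hosts: int) -> bool:
        """True once the number of affected hosts reaches the threshold."""
        return affected_hosts >= self.escalate_at_hosts


ransomware = Playbook(
    incident_type="ransomware",
    steps=[
        "Verify the alert is real",
        "Identify patient zero",
        "Preserve evidence before any containment action",
        "Notify IR lead",
    ],
    escalate_at_hosts=2,  # illustrative value only
)

print(ransomware.next_step(0))
print(ransomware.must_escalate(affected_hosts=3))
```

The point of the structure is that the analyst never has to decide what comes next from memory: the next step and the escalation trigger are both written down before the alert fires.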

(C) Tabletop exercises

A tabletop exercise takes a fictional incident scenario and walks the response team through it in a facilitated discussion. No actual systems. No real pressure. But the decisions that would be made in reality are surfaced and examined: when do you notify the board? When do you call in external support? When do you notify regulators? When do you isolate rather than monitor? These decisions made badly in a tabletop cost nothing. Made badly in a live incident, they cost everything.

The organisations that handle incidents well are not the ones with the best technology. They are the ones that practised their response before they needed it and made the uncomfortable decisions in an exercise rather than under fire.

The Six Phases: What Each One Actually Involves

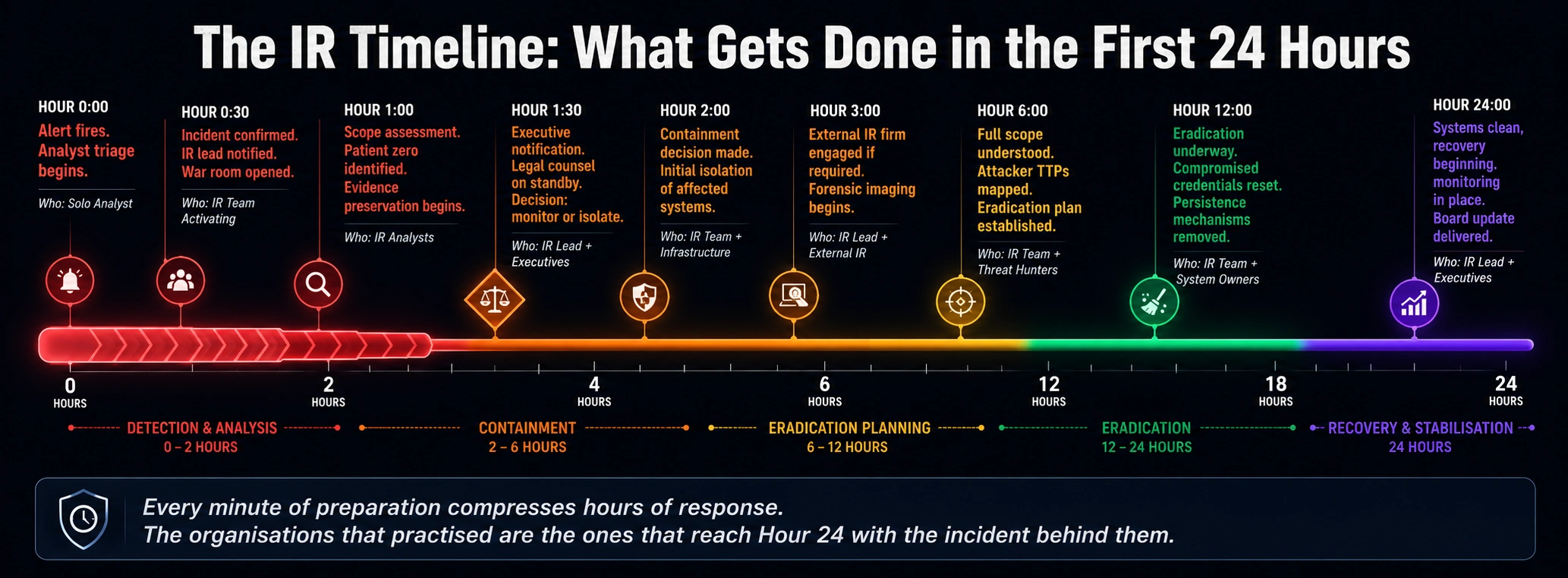

The standard incident response framework, used in various forms across NIST, SANS, ISO, and most enterprise IR programmes, describes six phases. What each phase looks like in practice under real conditions is substantially more chaotic than any framework diagram suggests.

PHASE 1: Preparation (Before the incident)

Building the capability to respond before you need it. IR plan, playbooks, tool deployment, team training, tabletop exercises, retainer agreements with external IR firms. Most organisations under-invest here and over-invest in technology while leaving the human process underdeveloped.

KEY ACTIONS: Complete IR plan. Validate playbooks. Run annual tabletop. Ensure IR tooling is deployed and working. Establish legal and communications retainers.

PHASE 2: Detection and Analysis (Hour 0 to Hour 2)

The alert fires. The analyst makes the initial assessment. Is this real? What is the scope? The first two hours are the most critical and the most uncertain. Information is incomplete. The temptation to act before you understand is strong and usually wrong. The goal of this phase is to confirm the incident, establish initial scope, and mobilise the response team without alerting the attacker that they have been detected.

KEY ACTIONS: Verify the alert is real. Identify patient zero. Preserve evidence before any containment action. Notify IR lead. Open incident war room. Begin timeline documentation.

PHASE 3: Containment (Hour 2 to Hour 6)

Containment comes in two types: short-term (stop the bleeding) and long-term (prevent recurrence). Short-term containment is the hardest decision in incident response because isolating systems disrupts business operations at the same moment it removes the attacker's access. The question is always: do we isolate now and accept business impact, or do we monitor to gather intelligence and risk further spread? There is no universally correct answer. It depends on what you know and what you cannot afford.

KEY ACTIONS: Isolate affected systems. Change compromised credentials. Block attacker C2 infrastructure. Preserve forensic images before cleaning. Document every containment action with timestamps.
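Documenting every containment action with a timestamp is easier when the record format is fixed in advance. The following is a minimal sketch, assuming an in-memory list as the log; the `ContainmentAction` fields, the `record_action` helper, and the host and analyst names are all hypothetical. A real team would write to whatever case-management system it already uses.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class ContainmentAction:
    """One immutable log entry; field names are illustrative."""
    timestamp: str            # ISO 8601 in UTC
    analyst: str
    target: str
    action: str
    evidence_preserved: bool  # was a forensic image taken first?


def record_action(log, analyst, target, action, evidence_preserved, now=None):
    """Append a timestamped entry to the log and return it."""
    now = now or datetime.now(timezone.utc)
    entry = ContainmentAction(now.isoformat(), analyst, target, action, evidence_preserved)
    log.append(entry)
    return entry


log = []
record_action(log, "analyst-1", "FIN-WS-042", "network isolation", True)
record_action(log, "analyst-2", "svc-backup", "credential reset", False)

print(json.dumps([asdict(entry) for entry in log], indent=2))
```

Recording in UTC avoids arguments later about which timezone an entry was written in, and the `evidence_preserved` flag makes it visible in the review whether forensic images were taken before cleaning.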

PHASE 4: Eradication (Hour 6 to Hour 24)

Removing the attacker from the environment completely. Not just closing the initial access vector but finding and eliminating every persistence mechanism, backdoor, and compromised credential the attacker established. This phase is where most organisations fail: they clean the obvious compromise and miss the secondary persistence that allows the attacker to return. Eradication requires a complete understanding of the attack timeline.

KEY ACTIONS: Identify all persistence mechanisms. Remove malware and attacker tools. Patch or remediate the initial access vulnerability. Rebuild compromised systems from known clean state. Reset all potentially compromised credentials.

PHASE 5: Recovery (Hour 24 to Resolution)

Restoring systems and operations to normal function with confidence that the attacker is no longer present. Recovery is not just a technical process. It requires verification that the eradication was complete and monitoring to detect any signs of return. Many organisations rush recovery under business pressure and miss attacker persistence that was not fully eliminated.

KEY ACTIONS: Restore from clean backups. Verify system integrity before returning to production. Monitor closely for signs of return. Communicate restoration status to stakeholders. Document recovery timeline.

PHASE 6: Post-Incident Review (Within 2 weeks of resolution)

The lessons learned process. What was the initial access vector? How long was the attacker present before detection? What slowed the response? What accelerated it? What needs to change in the IR plan, the playbooks, the tooling, or the team? The post-incident review is where the investment in IR capability pays forward. The organisations that do it seriously get better at every incident. The ones that skip it repeat the same mistakes.

KEY ACTIONS: Root cause analysis. Detection gap assessment. Response timeline review. Stakeholder debrief. IR plan updates. Lessons learned documentation. Communication of outcomes to board.

The Decisions That Make or Break the Response

Three decisions made in the first six hours of a significant incident determine the outcome more than any technical capability.

(A) Monitor or isolate

Isolating a compromised system removes the attacker's access and stops the spread. It also ends your visibility into what the attacker was doing, can destroy volatile evidence if systems are powered off, and may tip the attacker off that they have been detected, causing them to accelerate their timeline. Monitoring preserves intelligence and evidence but risks further compromise. The right answer depends on the confidence level of your scope assessment: if you know the attacker's reach, you can isolate safely. If you do not, monitoring while you map may be worth the risk.

(B) When to notify

Regulatory notification requirements under GDPR, NIS2, DORA, and sector-specific frameworks run on fixed clocks. GDPR requires notification to the supervisory authority within 72 hours of becoming aware of a personal data breach. NIS2 requires an early warning within 24 hours of becoming aware of a significant incident. Getting the timing wrong, either notifying before you have facts or missing the deadline, carries regulatory consequences. Legal counsel should be engaged in the first hour of any significant incident.
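Because both clocks start at the moment of awareness, the deadlines can be computed as soon as that moment is agreed. The sketch below uses only the two windows named above; the function names and the example date are assumptions for illustration, and deciding when the organisation "became aware" is a legal judgement, not something the code can settle.

```python
from datetime import datetime, timedelta, timezone

# Windows taken from the text above; confirm applicability with legal counsel.
NOTIFICATION_WINDOWS = {
    "NIS2 early warning": timedelta(hours=24),
    "GDPR supervisory authority": timedelta(hours=72),
}


def notification_deadlines(aware_at):
    """Return the latest permitted notification time for each regime."""
    if aware_at.tzinfo is None:
        raise ValueError("aware_at must be timezone-aware")
    return {name: aware_at + window for name, window in NOTIFICATION_WINDOWS.items()}


def hours_remaining(deadline, now):
    """Hours left before the deadline; negative once it has passed."""
    return (deadline - now).total_seconds() / 3600


# Hypothetical awareness time: 18:23 UTC, four hours after the first alert.
aware_at = datetime(2025, 3, 4, 18, 23, tzinfo=timezone.utc)
deadlines = notification_deadlines(aware_at)

for name, deadline in deadlines.items():
    print(f"{name}: notify by {deadline.isoformat()}")
```

Putting the computed deadlines on the war room board in the first hour turns a legal obligation into a visible countdown rather than something remembered on day three.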

(C) When to call external help

Most internal IR teams are well-equipped for incidents up to a certain complexity threshold. Ransomware with full estate encryption, nation-state-level intrusion, or simultaneous compromises across multiple business units are typically beyond what internal teams can handle alone without extending the response timeline significantly. The decision to engage an external IR firm should be made early rather than late. Most retainer agreements allow for engagement before you are certain you need it.

The incident response decisions that go wrong are almost never technical errors. They are communication failures, escalation failures, and scope assessment failures. The most technically skilled IR team in the world will make the wrong call if they do not have the right information at the right time.

What Good IR Looks Like After the Fact

The post-incident review is where organisations either learn and improve or repeat the same mistakes. The reviews that produce genuine capability improvement share common characteristics.

Timeline reconstruction: a complete, minute-by-minute account of what the attacker did, when they did it, and when it was detected. This requires log data that many organisations either do not retain or cannot query at the right fidelity.

Detection gap analysis: the specific moment the attacker entered versus the specific moment they were detected. The gap between these two events is the primary metric of detection capability. Understanding why the gap existed drives the capability investment.
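The detection gap is simple arithmetic once both timestamps are known. Here is a minimal sketch, with hypothetical dates chosen to match the eleven-day intrusion in the opening scenario; the function name is an assumption for this example.

```python
from datetime import datetime, timezone


def detection_gap_days(initial_access, detected):
    """Days between the attacker's entry and the moment of detection."""
    if detected < initial_access:
        raise ValueError("detection cannot precede initial access")
    return (detected - initial_access).total_seconds() / 86400


# Hypothetical timestamps, eleven days apart.
initial_access = datetime(2025, 2, 21, 14, 23, tzinfo=timezone.utc)
detected = datetime(2025, 3, 4, 14, 23, tzinfo=timezone.utc)

gap = detection_gap_days(initial_access, detected)
print(f"Detection gap: {gap:.1f} days")  # prints "Detection gap: 11.0 days"
```

The hard part is not the subtraction but establishing `initial_access` with confidence, which depends on the log retention discussed under timeline reconstruction.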

Response timeline analysis: where the response was delayed, what caused the delay, and what process or capability change would have compressed it.
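Finding where the response was delayed amounts to measuring the interval between consecutive milestones and looking at the longest one. The sketch below assumes milestones were recorded as (name, timestamp) pairs; the milestone names and times are invented for illustration.

```python
from datetime import datetime, timedelta


def stage_durations(milestones):
    """Return (label, duration) for each pair of consecutive milestones."""
    ordered = sorted(milestones, key=lambda m: m[1])
    return [
        (f"{earlier_name} -> {later_name}", later_time - earlier_time)
        for (earlier_name, earlier_time), (later_name, later_time)
        in zip(ordered, ordered[1:])
    ]


# Hypothetical response milestones from a single afternoon.
milestones = [
    ("alert raised", datetime(2025, 3, 4, 14, 23)),
    ("IR lead notified", datetime(2025, 3, 4, 14, 50)),
    ("war room opened", datetime(2025, 3, 4, 16, 5)),
    ("first host isolated", datetime(2025, 3, 4, 16, 40)),
]

durations = stage_durations(milestones)
slowest = max(durations, key=lambda d: d[1])
print(f"Longest delay: {slowest[0]} ({slowest[1]})")
```

In this invented data the longest gap is the 75 minutes between notifying the IR lead and opening the war room, which points at a mobilisation problem rather than a detection or tooling problem.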

Honest escalation review: were the right people notified at the right time? Were decisions made at the right level with the right information? Most response failures involve decisions made too slowly because escalation was unclear.

The organisation that does a thorough post-incident review after every significant incident is a different organisation after five years than the one that resolves incidents and moves on. Continuous improvement in IR capability is not a theory. It is compounding.
