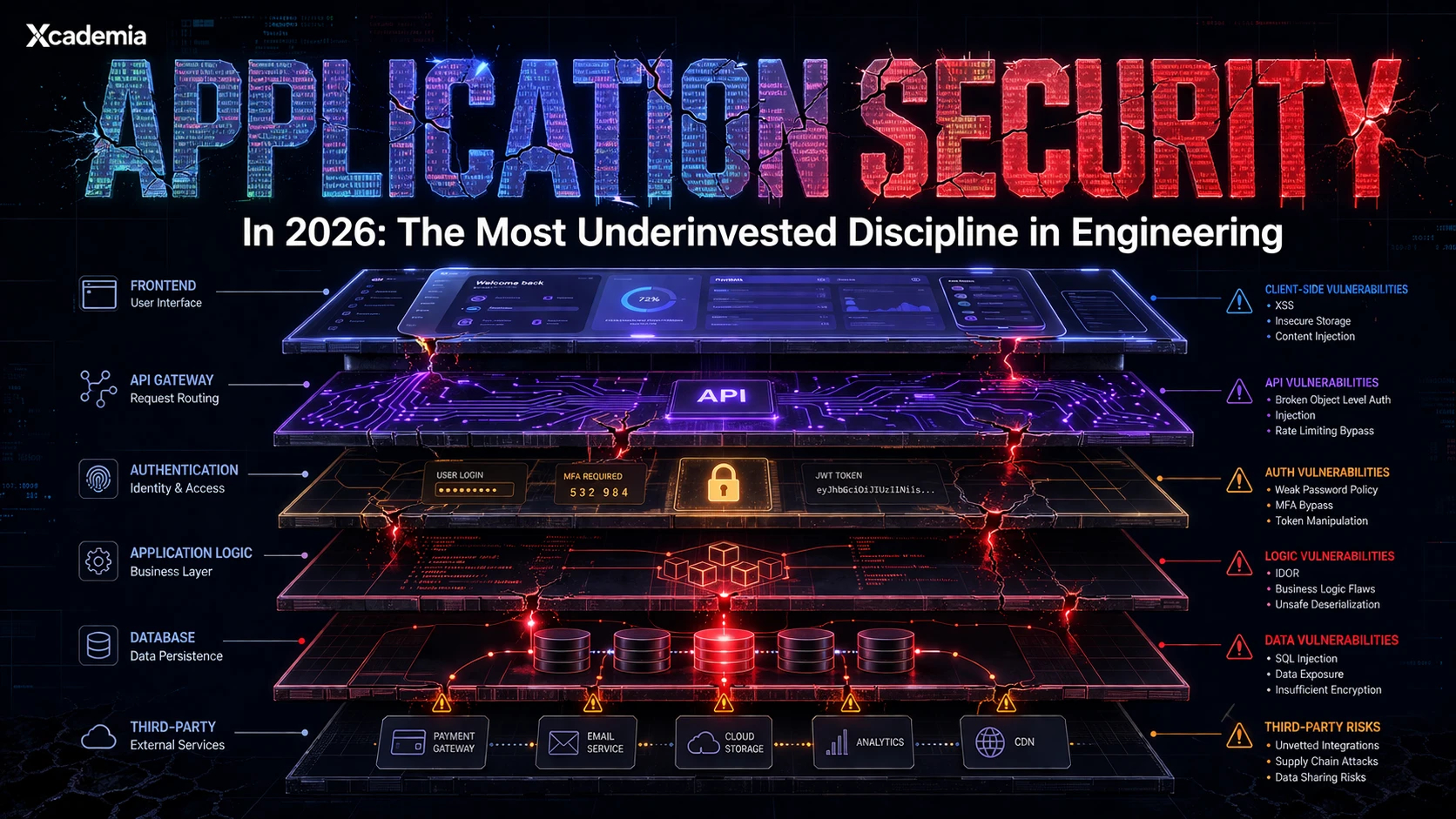

Application Security in 2026: Why It Is the Most Underinvested Discipline in Engineering

A single vulnerable library once exposed billions of systems. In 2026, application security is every organisation’s real attack surface, yet still underfunded. This article explains why AppSec gaps persist, what the OWASP Top 10 reveals, and how to build secure software before breaches happen.

In 2021, a vulnerability in the Apache Log4j logging library put an estimated three billion devices at risk. The flaw was trivial to exploit. A single malicious string sent in an HTTP request could force any affected system to download and execute arbitrary code from an attacker-controlled server. Governments issued emergency alerts. Security teams worked through weekends. Incident response firms were overwhelmed.

Log4Shell was not an attack on a network perimeter. It was not phishing. It was not a sophisticated nation-state operation. It was a flaw in a piece of open-source software that developers had been copying into their applications for years without examining what it actually did or whether it was secure.

Application security is not a specialist concern for software companies. It is the attack surface that every organisation now carries, whether it builds its own software or depends on software others built. In 2026, that surface is larger, more complex, and more poorly understood than at any point in the history of computing.

The perimeter is gone. The application is the new frontier. And most engineering teams are building on it without the skills, the processes, or the tooling to understand what they are exposing.

Why Application Security Is Consistently Underinvested

The security budget conversation in most organisations still defaults to the same categories: endpoint protection, network security, identity management, SOC operations. Application security rarely sits at the same table, and when it does it is usually in the form of penetration testing scheduled once a year and forgotten the other fifty-one weeks.

Three structural reasons explain this persistent underinvestment.

Security was not part of how software was built

The discipline of software engineering developed its practices, methodologies, and professional culture largely before security was treated as an engineering concern. Agile, DevOps, continuous delivery, microservices: these movements transformed how software is built and deployed. None of them had security as a core design principle. Security was added later, usually as a gate at the end of the development process, by a separate team, often too late to change anything fundamental.

The skills gap is acute

Application security requires a combination of skills that is genuinely rare: deep software development knowledge, an attacker mindset, an understanding of security principles at the code level, and the ability to communicate findings to developers in a way that produces actual fixes. People with all of these capabilities are in short supply. Most security teams have professionals who understand threats but not code. Most development teams have professionals who understand code but not threats. The intersection is where AppSec engineers live, and there are not enough of them.

Speed works against security

Modern software development moves fast. Continuous integration and deployment pipelines ship code multiple times per day in mature organisations. Security processes designed for quarterly release cycles do not fit into pipelines that deploy hourly. The choice organisations face, often implicitly rather than explicitly, is between speed and security. Speed usually wins because the consequences of insecurity are distant and probabilistic, while the consequences of delay are immediate and visible.

The choice between speed and security is a false choice if you build security into the development process from the start. It only feels like a trade-off if you are trying to add it at the end.

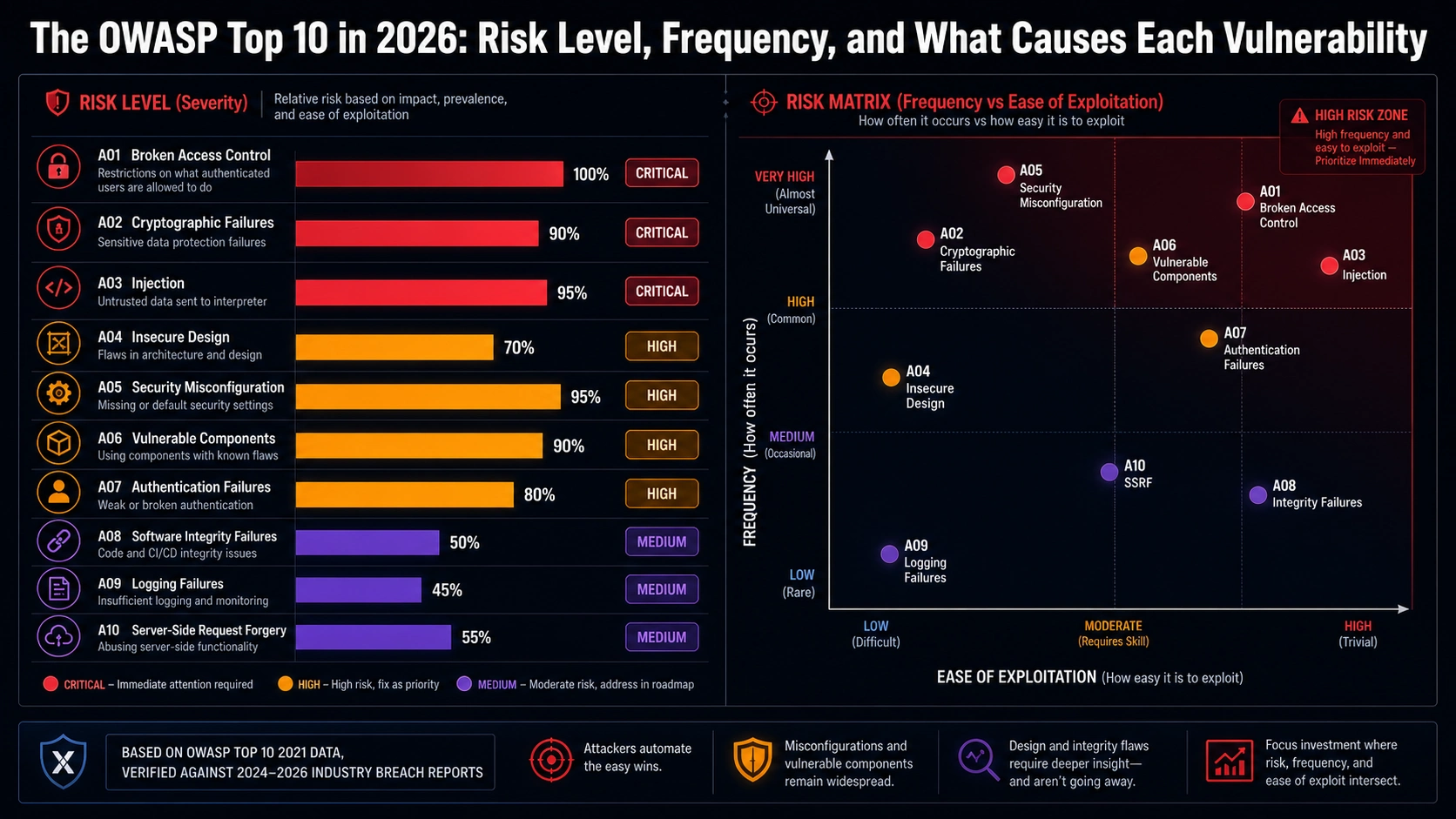

The OWASP Top 10: The Vulnerability List Every Developer Should Know

The Open Worldwide Application Security Project (OWASP, formerly the Open Web Application Security Project) publishes a list of the ten most critical security risks to web applications, updated every few years based on real-world vulnerability data. The categories below follow the 2021 edition. The OWASP Top 10 is not a checklist. It is a diagnostic framework. Understanding it is the starting point for understanding where most application security failures actually occur.

| ID | VULNERABILITY | WHAT IT MEANS IN PRACTICE |
|---|---|---|
| A01 | Broken Access Control | Users accessing data or functions they should not be able to reach. |
| A02 | Cryptographic Failures | Sensitive data exposed due to weak or absent encryption. |
| A03 | Injection | Attacker-controlled data sent to an interpreter. SQL injection is the classic example. |
| A04 | Insecure Design | Security not considered during architecture. No control can fix a fundamentally flawed design. |
| A05 | Security Misconfiguration | Default settings, open cloud storage, unnecessary features left enabled. |
| A06 | Vulnerable and Outdated Components | Third-party libraries and frameworks carrying known vulnerabilities. |
| A07 | Identification and Authentication Failures | Weak credential management, missing MFA, session handling errors. |
| A08 | Software and Data Integrity Failures | Assuming updates and pipelines are trustworthy without verification. CI/CD attacks. |
| A09 | Security Logging and Monitoring Failures | Not capturing or acting on evidence of attack. The breach that was never noticed. |
| A10 | Server-Side Request Forgery | Application fetching a remote resource based on attacker-supplied URL. |

Three observations about this list are worth making explicitly.

First, none of these vulnerabilities require sophisticated attack capability. Every one of them can be exploited by an attacker with moderate technical knowledge and publicly available tools. The barrier to exploitation is not high.

Second, most of them are preventable through known, documented engineering practices. Parameterised queries prevent injection. Proper access control models prevent broken access control. Keeping dependencies updated prevents vulnerable component exploitation. These are not exotic mitigations. They are engineering fundamentals.
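To make the first of those fundamentals concrete, here is a minimal sketch of a parameterised query using Python's standard `sqlite3` module. The `users` table, the function names, and the payload are illustrative assumptions, not taken from any real system.

```python
import sqlite3


def find_user_unsafe(conn, username):
    # VULNERABLE: attacker-controlled text becomes part of the SQL statement.
    query = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, username):
    # The ? placeholder passes the value separately from the SQL text,
    # so the database treats it as data and never as SQL.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()


def demo():
    # Hypothetical in-memory table with two rows, for illustration only.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany(
        "INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)]
    )
    payload = "nobody' OR '1'='1"
    return find_user_unsafe(conn, payload), find_user_safe(conn, payload)


if __name__ == "__main__":
    unsafe_rows, safe_rows = demo()
    print("unsafe query returned", len(unsafe_rows), "rows")
    print("safe query returned", len(safe_rows), "rows")
```

With the injected payload, the string-built query matches every row in the table, while the parameterised version matches none, because no user is literally named `nobody' OR '1'='1`.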

Third, most of these categories reappear in the OWASP Top 10 edition after edition because organisations consistently fail to address them. They are not novel problems. They are persistent ones.

The OWASP Top 10 is not a list of sophisticated threats. It is a list of engineering failures that recur because the discipline of building secure software is not yet standard practice.

What Secure Software Development Actually Requires

Building secure software is not a matter of running a security scan at the end of the development cycle and fixing the high-severity findings. That approach misses the design flaws that scans cannot detect, produces findings too late in the process to address without significant rework, and creates a compliance theatre dynamic where teams optimise for passing the scan rather than producing secure software.

Genuine application security capability operates across the entire development lifecycle.

Threat modelling during design

Before a line of code is written, the security implications of architectural decisions should be examined. Threat modelling is the practice of systematically identifying what can go wrong, who might cause it, and how the design can be modified to reduce or eliminate the risk. STRIDE (Spoofing, Tampering, Repudiation, Information disclosure, Denial of service, Elevation of privilege) is one of the most widely used frameworks. Organisations that embed threat modelling into their design process catch the expensive problems before they are built in.
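One way to make STRIDE less abstract is to treat it as a checklist generator. The sketch below assumes a simplified version of the commonly published "STRIDE-per-element" mapping, in which each kind of design element is exposed to a subset of the six categories. The element names and the exact mapping are illustrative assumptions, and a real threat model would go on to record mitigations and owners for each entry.

```python
from enum import Enum


class Threat(Enum):
    SPOOFING = "Spoofing"
    TAMPERING = "Tampering"
    REPUDIATION = "Repudiation"
    INFORMATION_DISCLOSURE = "Information disclosure"
    DENIAL_OF_SERVICE = "Denial of service"
    ELEVATION_OF_PRIVILEGE = "Elevation of privilege"


# Simplified STRIDE-per-element mapping (an assumption for this sketch):
# which threat categories are usually asked about for each element kind.
APPLICABLE = {
    "external_entity": [Threat.SPOOFING, Threat.REPUDIATION],
    "process": list(Threat),
    "data_store": [
        Threat.TAMPERING,
        Threat.REPUDIATION,
        Threat.INFORMATION_DISCLOSURE,
        Threat.DENIAL_OF_SERVICE,
    ],
    "data_flow": [
        Threat.TAMPERING,
        Threat.INFORMATION_DISCLOSURE,
        Threat.DENIAL_OF_SERVICE,
    ],
}


def threat_checklist(elements):
    """Return (element name, threat category) pairs to review for a design."""
    return [
        (name, threat.value)
        for name, kind in elements
        for threat in APPLICABLE[kind]
    ]


# Hypothetical login feature, described as a tiny data flow diagram.
DESIGN = [
    ("Browser", "external_entity"),
    ("Login API", "process"),
    ("User DB", "data_store"),
    ("Browser -> Login API", "data_flow"),
]

if __name__ == "__main__":
    for element, threat in threat_checklist(DESIGN):
        print(f"{element}: how could {threat.lower()} happen here?")
```

The value is not in the code but in the questions it forces: every element on the diagram gets asked about every category that applies to it, before anything is built.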

Secure coding practices

Developers need to understand the vulnerability classes they are most likely to introduce. Input validation. Output encoding. Parameterised queries. Proper error handling that does not leak information. Secure defaults. These are not advanced topics. They are the baseline that every developer writing code that touches the internet should understand. Most do not, because their training did not cover it and their employers have not invested in changing that.
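A minimal sketch of three of these baselines using only the Python standard library. The username rule, the function names, and the response shape are assumptions chosen for illustration; real applications would normally rely on their framework's validation and templating layers, which do the same things more thoroughly.

```python
import html
import logging
import re

logger = logging.getLogger(__name__)

# Allowlist validation: describe what is permitted, reject everything else.
# The 3-32 character rule is an assumed policy for this example.
USERNAME_RE = re.compile(r"[A-Za-z0-9_]{3,32}")


def validate_username(raw):
    if not USERNAME_RE.fullmatch(raw):
        raise ValueError("invalid username")
    return raw


def render_greeting(display_name):
    # Output encoding: escape untrusted text at the point it enters HTML,
    # so markup characters are displayed rather than interpreted.
    return f"<p>Hello, {html.escape(display_name)}!</p>"


def error_response(exc):
    # Error handling that does not leak: full detail goes to the server log,
    # the caller only ever sees a generic message.
    logger.error("request failed", exc_info=exc)
    return {"error": "Internal error"}


if __name__ == "__main__":
    print(render_greeting("<script>alert(1)</script>"))
    print(error_response(RuntimeError("db password rejected for host 10.0.0.5")))
```

Validation and encoding are complementary rather than interchangeable: validation narrows what enters the system, and encoding makes whatever does enter safe for the context in which it is displayed.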

Automated security testing in the pipeline

Static Application Security Testing (SAST) analyses source code for vulnerability patterns without executing it. Dynamic Application Security Testing (DAST) tests running applications by sending attack payloads and observing responses. Software Composition Analysis (SCA) identifies known vulnerabilities in third-party dependencies. None of these tools is sufficient on its own. All of them, integrated into the CI/CD pipeline, dramatically improve the security of what ships.
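To show what "analysing source code without executing it" means in practice, here is a toy static check built on Python's standard `ast` module. It flags calls to any method named `execute` whose first argument is an f-string or a binary expression such as string concatenation, a common sign of SQL being assembled from input. This is a deliberately naive sketch under those assumptions. Production SAST tools track data flow across functions and files, and this one would both miss real issues and raise false positives.

```python
import ast


def find_sql_string_building(source):
    """Return sorted line numbers of suspicious .execute(...) calls."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr == "execute"
            and node.args
        ):
            first_arg = node.args[0]
            # JoinedStr is an f-string; BinOp covers "..." + value and "..." % value.
            if isinstance(first_arg, (ast.JoinedStr, ast.BinOp)):
                findings.append(node.lineno)
    return sorted(findings)


# Hypothetical code under review. It is only parsed, never run.
SAMPLE = '''
def lookup_unsafe(cur, name):
    cur.execute(f"SELECT * FROM t WHERE n = '{name}'")

def lookup_safe(cur, name):
    cur.execute("SELECT * FROM t WHERE n = ?", (name,))
'''

if __name__ == "__main__":
    for line in find_sql_string_building(SAMPLE):
        print(f"line {line}: SQL built from a dynamic string")
```

A check like this costs milliseconds per file, which is why it fits in a pipeline that deploys hourly. It is also exactly the kind of check that cannot see a design flaw.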

Manual security review and penetration testing

Automated tools find what they are built to find. They miss logic flaws, business rule violations, chained vulnerabilities, and the creative attack paths that a skilled human tester identifies. Regular penetration testing by qualified professionals remains essential, not as a compliance exercise but as a genuine test of what automated tooling missed.

Security scanning finds the obvious. Threat modelling finds the dangerous. The organisations that rely only on scanning are protecting against the attacks that require the least skill to conduct.

The Shift Left Imperative

Shift left is a phrase that has become so common in security discussions that it has lost some of its force. The underlying principle is still correct and still urgently necessary.

The cost of fixing a security vulnerability rises dramatically as it moves through the development lifecycle. A design flaw caught during architecture review might take hours to address. The same flaw caught in a penetration test after deployment might require weeks of rework, re-testing, and redeployment. The same flaw discovered after exploitation might involve breach notification, regulatory investigation, legal liability, and reputational damage that no rework budget can address.

Shifting security left means moving it earlier in the process: into design, into development, into the daily work of every engineer rather than into a gate at the end that most things pass through unchecked. It means AppSec engineers working alongside development teams rather than reviewing their output after the fact. It means developers understanding security well enough to make better decisions before the code is written.

A commonly cited rule of thumb holds that every hour spent on security during design saves around ten hours in remediation after deployment. The exact multiple varies between organisations, but the direction does not: security debt costs more the longer it is left unpaid.

The AppSec Engineer: The Role Engineering Teams Need Most

The Application Security Engineer is the professional who sits at the intersection of development and security. They understand code deeply enough to read it, review it, and write it. They understand attacks deeply enough to identify where vulnerabilities will be introduced and how they will be exploited. They understand processes deeply enough to change how engineering teams work, not just what they produce.

This role is in acute demand and short supply across every sector that builds or depends on software. The financial services firms building trading platforms and customer-facing applications. The healthcare organisations building patient portals and clinical tools. The government departments digitising public services. The technology companies building products at scale.

What separates an effective AppSec engineer from someone who has learned AppSec theory is practical experience with real codebases, real vulnerabilities, and real development teams. The classroom version of this role is insufficient. The work requires people who have found vulnerabilities in real applications, explained them to sceptical developers, and seen the fixes ship.

The AppSec engineer is not a gatekeeper. They are a capability multiplier: the person who makes every developer on the team better at building secure software than they would be without them.

What the 2026 AppSec Landscape Looks Like

Three developments are reshaping application security in 2026 specifically.

AI-generated code at scale

The adoption of AI coding assistants has accelerated sharply. Developers are generating code, completing functions, and building features with AI tools faster than manual code review can keep up. The security implication is direct: AI-generated code can contain the same vulnerability classes as human-written code, and it can introduce them faster than existing security processes can catch them. Organisations that have not updated their AppSec practices to account for AI-generated code are carrying an unmitigated risk.

Supply chain attacks

The Log4Shell incident showed how a flaw in a single widely used dependency propagates to every application that imports it. The SolarWinds operation, disclosed a year earlier, exploited the same trust relationship more deliberately: attackers compromised the vendor's build process so that legitimate, signed updates delivered malicious code to customers. As organisations depend on increasingly complex webs of open-source and commercial software components, the attack surface of the supply chain becomes as significant as the attack surface of the application itself.
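One small, concrete supply chain control is to pin the expected digest of every artifact you consume and refuse anything that does not match. The sketch below uses the standard `hashlib` and `hmac` modules. The artifact bytes and function names are hypothetical, and in practice the pinned digest would come from a lockfile or the publisher's signed release metadata rather than being computed locally as it is here.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, pinned_digest: str) -> bool:
    # compare_digest is a constant-time comparison from the standard library.
    return hmac.compare_digest(sha256_hex(data), pinned_digest.lower())


if __name__ == "__main__":
    # Hypothetical artifact contents. The digest is recorded when the
    # dependency is first reviewed and then checked on every later download.
    reviewed = b"contents of example-lib-1.0.0.tar.gz"
    pinned = sha256_hex(reviewed)

    print(verify_artifact(reviewed, pinned))                 # unchanged artifact
    print(verify_artifact(reviewed + b" backdoor", pinned))  # modified artifact
```

Digest pinning only proves that you received the bytes you expected. It does not help when the expected version is itself vulnerable or was built on a compromised system, which is why it complements dependency scanning and build provenance checks rather than replacing them.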

API proliferation

Modern application architectures are built around APIs. Mobile applications, web frontends, third-party integrations, and internal microservices all communicate through APIs. API security has its own vulnerability taxonomy, its own testing approaches, and its own failure modes. The OWASP API Security Top 10 is a separate list from the web application Top 10, and many organisations have not yet built the capability to address it.
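The first entry in the 2023 edition of the OWASP API Security Top 10 is broken object level authorisation: an endpoint that checks the caller is logged in but not that the requested object belongs to them. The sketch below shows the missing check in isolation. The `Invoice` type, the in-memory store, and the exception names are hypothetical stand-ins for a real framework's models and error responses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner_id: int
    total: float


class NotFound(Exception):
    pass


class Forbidden(Exception):
    pass


# Hypothetical data store: invoice id -> invoice.
INVOICES = {
    1: Invoice(1, owner_id=10, total=99.0),
    2: Invoice(2, owner_id=20, total=15.5),
}


def get_invoice(current_user_id: int, invoice_id: int) -> Invoice:
    invoice = INVOICES.get(invoice_id)
    if invoice is None:
        raise NotFound(f"invoice {invoice_id} does not exist")
    # The object-level check: being authenticated is not enough,
    # the caller must also own the specific object being requested.
    if invoice.owner_id != current_user_id:
        raise Forbidden("caller does not own this invoice")
    return invoice


if __name__ == "__main__":
    print(get_invoice(current_user_id=10, invoice_id=1))
    try:
        get_invoice(current_user_id=10, invoice_id=2)
    except Forbidden as exc:
        print("blocked:", exc)
```

Some teams prefer to return the same "not found" response in both failure cases so that an attacker cannot enumerate which identifiers exist. Either way, the ownership check has to run on every request that takes an object identifier from the client.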

The application security challenges of 2026 are fundamentally different from those of 2019. AI-generated code, supply chain attacks, and API proliferation require updated skills, updated processes, and updated tooling. The teams still running on 2019 practices are not just behind. They are exposed.

The Bottom Line

Every organisation that builds software or depends on software, which in 2026 means every organisation of any meaningful size, carries an application security attack surface. The question is not whether that attack surface exists. The question is whether the capability exists to understand it, manage it, and reduce it to an acceptable level.

The Log4Shell lesson has not been fully learned. The supply chain lesson from SolarWinds has not been fully learned. The API security lesson is still being absorbed. The organisations that will be in the best position when the next significant application vulnerability emerges are the ones that have built AppSec capability into their engineering practice before they need it.

Application security is not a product you buy. It is a capability you build. The organisations that treat it as the former will keep discovering that the product was insufficient. The organisations that treat it as the latter will be significantly more resilient.
