The EU AI Act Is Now Law

The EU AI Act is now binding law with penalties reaching 7% of global turnover. This guide explains who it applies to, the four risk tiers, key 2026 deadlines, and what organisations must do to achieve High-Risk AI compliance.

What Every Organisation Must Do in 2026

The EU Artificial Intelligence Act entered into force on 1 August 2024. It is the world's first comprehensive legal framework for artificial intelligence. Its reach extends well beyond the European Union: any organisation providing AI systems to EU market users, or deploying AI systems that affect people in the EU, is subject to its requirements regardless of where that organisation is headquartered.

For UK organisations that retained EU market access post-Brexit, for multinational corporations with EU operations, and for AI developers building products used by EU citizens, the Act creates real legal obligations with real enforcement consequences. Understanding what those obligations are, and what the implementation timeline requires, is no longer optional.

This article explains what the EU AI Act actually requires, which organisations it applies to, and what must be done to achieve compliance in 2026.

The EU AI Act is not a set of guidelines. It is binding law with enforcement teeth. Maximum penalties reach 3% to 7% of global annual turnover. For large technology organisations, those numbers run into hundreds of millions of euros. The compliance conversation that was theoretical twelve months ago is now operational.

The Scope: Who It Applies To

The EU AI Act applies to a broad range of organisations and individuals.

Providers

Any organisation or individual that develops an AI system or a general-purpose AI model and places it on the EU market or puts it into service in the EU. This includes organisations headquartered outside the EU if their systems are used within it. A US-headquartered AI company whose product is used by EU businesses or consumers is a provider under the Act.

Deployers

Organisations that use an AI system in a professional context, where the output of that system affects people within the EU. Using an AI hiring tool to screen candidates in Germany, an AI diagnostic tool in a French hospital, or an AI credit scoring system for Italian consumers all constitute deployment under the Act.

Importers and distributors

Organisations that import AI systems developed outside the EU into the EU market, or that distribute AI systems within the EU, carry their own obligations under the Act.

The extraterritorial reach

The Act explicitly applies to AI systems whose output is used within the EU, regardless of where the provider is established. This extraterritorial application mirrors the GDPR model and creates compliance obligations for non-EU organisations that are not always immediately obvious from reading the headline description of the legislation.

If your AI system touches EU citizens or EU market users in any professional context, you are likely within scope. The question is not whether the Act applies to you. It is which obligations apply and when they take effect.
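The scope test described above can be sketched as a small decision function. This is a minimal illustration that simplifies the Act's scope rules: the `Role` names follow the four categories in this section, while `AISystemProfile`, its fields, and `likely_in_scope` are hypothetical names introduced only for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"
    IMPORTER = "importer"
    DISTRIBUTOR = "distributor"


@dataclass
class AISystemProfile:
    # All fields are illustrative assumptions, not terms defined by the Act.
    role: Role
    placed_on_eu_market: bool    # offered or put into service in the EU
    output_used_in_eu: bool      # output affects people located in the EU
    professional_context: bool   # used in the course of a professional activity


def likely_in_scope(profile: AISystemProfile) -> bool:
    """Return True when the profile matches the scope triggers described above.

    Headquarters location is deliberately absent: scope turns on where the
    system is marketed or where its output is used.
    """
    if profile.role in (Role.PROVIDER, Role.IMPORTER, Role.DISTRIBUTOR):
        return profile.placed_on_eu_market or profile.output_used_in_eu
    # Deployers: professional use whose output affects people in the EU.
    return profile.professional_context and profile.output_used_in_eu


# A US-headquartered provider whose product is used by EU consumers.
us_provider = AISystemProfile(
    role=Role.PROVIDER,
    placed_on_eu_market=False,
    output_used_in_eu=True,
    professional_context=True,
)
print(likely_in_scope(us_provider))
```

A positive result only answers the first question. Which obligations apply, and from when, depends on the risk tier and the implementation timeline.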

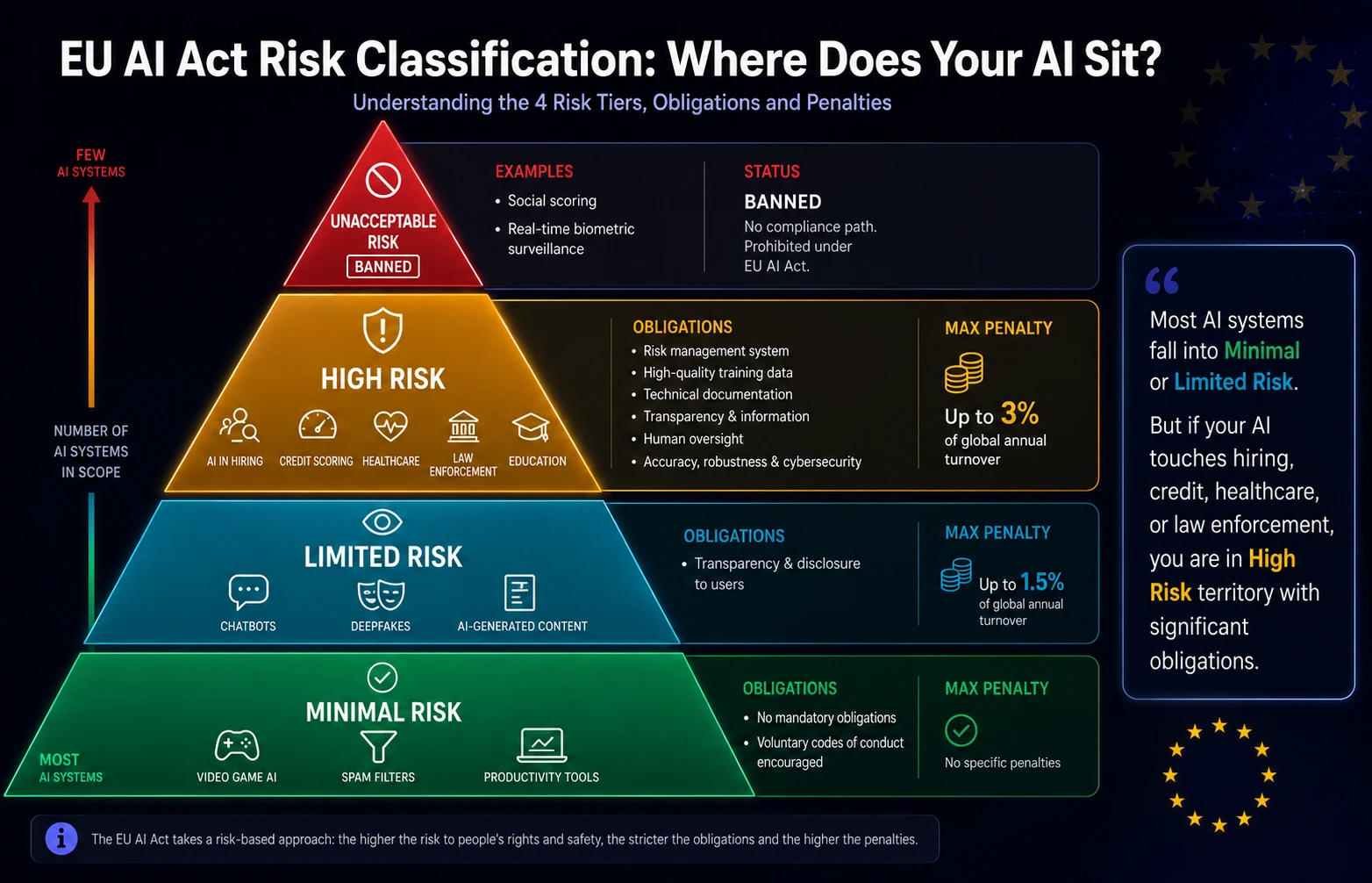

The Four-Tier Risk Classification

The Act classifies AI systems into four risk tiers. The tier determines the compliance obligations. Understanding the classification is the starting point for every organisation's AI Act compliance programme.

Tier 1: Unacceptable Risk (Prohibited)

Examples: Social scoring by public authorities. Real-time biometric surveillance in public spaces (with narrow exceptions). AI systems that exploit psychological vulnerabilities. Predictive policing based solely on profiling.

Obligations: These AI systems are banned outright in the EU. No compliance path exists. If your organisation operates any of these systems, they must be discontinued for EU use.

Maximum penalty: Up to 7% of global annual turnover or EUR 35 million (whichever is higher). Systems must be withdrawn.

Tier 2: High Risk (Stringent Obligations)

Examples: AI in critical infrastructure (energy, water, transport). AI in education (assessing students). AI in employment (recruiting, performance management). AI in essential services (credit scoring, insurance). AI in law enforcement. AI in migration and border control. AI in justice administration.

Obligations: Mandatory risk management system. High-quality training data requirements. Technical documentation and record-keeping. Transparency and provision of information to users. Human oversight mechanisms. Accuracy, robustness, and cybersecurity standards. Registration in EU database.

Maximum penalty: Up to 3% of global annual turnover or EUR 15 million (whichever is higher).

Tier 3: Limited Risk (Transparency Obligations)

Examples: AI systems that interact with humans (chatbots). AI systems that generate synthetic content (deepfakes, AI-generated text, images). Emotion recognition systems.

Obligations: Obligation to inform users they are interacting with an AI. Disclosure when content is AI-generated. Users must be able to distinguish AI from human interaction.

Maximum penalty: Up to 3% of global annual turnover or EUR 15 million (whichever is higher) for breaches of the transparency obligations.

Tier 4: Minimal Risk (No Obligations)

Examples: AI-enabled video games. Spam filters. AI-powered search engines. Most standard business productivity AI tools.

Obligations: No mandatory obligations under the Act, though voluntary codes of conduct are encouraged.

Maximum penalty: N/A for this tier.
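The "whichever is higher" rule in the penalty figures is simple arithmetic, and a worked example shows how quickly the percentage overtakes the fixed amount. The sketch below uses the High-Risk tier figures quoted above (3% or EUR 15 million). The function name `max_penalty_eur` and the two turnover figures are hypothetical, and the result is the ceiling of the fine, not the amount a regulator would actually impose.

```python
def max_penalty_eur(
    global_turnover_eur: float,
    turnover_pct: float,
    fixed_amount_eur: float,
) -> float:
    """Return the penalty ceiling: the higher of a turnover share or a fixed sum."""
    turnover_based = global_turnover_eur * turnover_pct / 100
    return max(turnover_based, fixed_amount_eur)


# High-Risk tier figures from the classification above.
HIGH_RISK_PCT = 3
HIGH_RISK_FIXED_EUR = 15_000_000

# Hypothetical large organisation: EUR 2 billion global turnover.
large = max_penalty_eur(2_000_000_000, HIGH_RISK_PCT, HIGH_RISK_FIXED_EUR)

# Hypothetical smaller organisation: EUR 100 million global turnover.
small = max_penalty_eur(100_000_000, HIGH_RISK_PCT, HIGH_RISK_FIXED_EUR)

print(f"Large organisation ceiling: EUR {large:,.0f}")
print(f"Smaller organisation ceiling: EUR {small:,.0f}")
```

For the larger organisation the 3% share (EUR 60 million) sets the ceiling. For the smaller one, 3% is only EUR 3 million, so the fixed EUR 15 million figure applies instead.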

The Implementation Timeline: What Is Required When

The EU AI Act has a phased implementation schedule. Not all obligations are active immediately.

February 2025: Prohibited AI systems banned

Unacceptable-risk systems have been prohibited in the EU since February 2025. This deadline has passed. Any organisation still operating Tier 1 systems for EU users is already in violation.

August 2025: GPAI model obligations

Obligations for providers of general-purpose AI models (such as large language models) came into force in August 2025. Providers of foundation models must provide technical documentation, comply with copyright law, and publish summaries of training data used. Providers of models that pose systemic risk face additional obligations including adversarial testing and incident reporting.

August 2026: High-risk AI system obligations

The full High-Risk AI system requirements apply from August 2026. This is the most significant deadline for most organisations. Any organisation deploying AI in the categories listed under High Risk must have its risk management, documentation, human oversight, and registration measures in place by this date.

August 2027: High-risk AI in regulated products

AI systems embedded in products already regulated under existing EU law (medical devices, machinery, vehicles) have an extended deadline of August 2027 for full compliance.

August 2026 is the critical deadline for most organisations. High-risk AI applications in hiring, credit scoring, healthcare decision-support, and law enforcement must be compliant. Organisations that have not begun their compliance programme are running out of time.
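The phased schedule above lends itself to a small lookup table, which a compliance team could use to check which obligations are live on a given date and how long remains before the next one. The sketch below is illustrative: the milestone labels are this article's headings, the day of the month (the 2nd) reflects the Act's application dates, and `MILESTONES`, `obligations_in_force`, and `days_until` are hypothetical names.

```python
from datetime import date

# Application dates for each phase described above.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited AI systems banned"),
    (date(2025, 8, 2), "GPAI model obligations"),
    (date(2026, 8, 2), "High-risk AI system obligations"),
    (date(2027, 8, 2), "High-risk AI in regulated products"),
]


def obligations_in_force(on: date) -> list[str]:
    """Return the labels of every phase that already applies on the given date."""
    return [label for start, label in MILESTONES if start <= on]


def days_until(label: str, today: date) -> int:
    """Return days remaining until a phase applies (negative once it has passed)."""
    for start, name in MILESTONES:
        if name == label:
            return (start - today).days
    raise KeyError(f"Unknown milestone: {label}")


check_date = date(2026, 1, 1)  # hypothetical review date
print(obligations_in_force(check_date))
print(days_until("High-risk AI system obligations", check_date))
```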

What High-Risk Compliance Actually Requires

For most commercial organisations, the most significant and complex obligations sit in the High-Risk tier. Understanding what compliance requires in operational terms is the starting point for building a compliance programme.

Risk management system

A documented risk management system covering the full lifecycle of the AI system: design, development, testing, deployment, monitoring, and decommissioning. The system must identify risks to health, safety, and fundamental rights, implement appropriate mitigation measures, and be reviewed and updated continuously.

Data governance

Training, validation, and testing data must meet defined quality standards. Data must be relevant, representative, free from errors to the extent practicable, and sufficiently complete for the intended purpose. Data governance documentation must be maintained throughout the system's lifecycle.

Technical documentation

Comprehensive technical documentation must exist before a High-Risk AI system is placed on the market or put into service. This includes a description of the system and its purpose, the design and development methodology, validation procedures, performance metrics, and the assumptions and limitations of the system.

Human oversight

High-Risk AI systems must be designed to allow effective human oversight. Users must be able to understand the system's outputs, monitor its operation, override or interrupt it when necessary, and avoid automation bias. The design of the human oversight mechanism must be documented and validated.

Transparency and information provision

Providers of High-Risk AI systems must supply deployers with instructions for use that describe the system's capabilities, limitations, and intended purpose. The information must be accessible, understandable, and sufficient to allow appropriate use.

Registration

High-Risk AI systems must be registered in the EU database before deployment. The registration requirement creates a public record of the system's existence, purpose, and compliance status.

High-Risk AI compliance is not a single document. It is a programme of ongoing governance, documentation, and oversight that must be embedded into the organisation's AI development and deployment processes. It requires dedicated capability that most organisations do not yet have.
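One way to make that programme concrete is to track evidence against each of the six requirement areas listed in this section, per AI system. The following is a minimal sketch of such a tracker, not a prescribed format: `REQUIREMENTS`, `HighRiskSystemRecord`, the system name, and the document references are all hypothetical.

```python
from dataclasses import dataclass, field

# The six requirement areas described in this section.
REQUIREMENTS = (
    "risk_management_system",
    "data_governance",
    "technical_documentation",
    "human_oversight",
    "transparency_information",
    "eu_database_registration",
)


@dataclass
class HighRiskSystemRecord:
    name: str
    # Maps a requirement to a reference for the evidence that satisfies it.
    evidence: dict[str, str] = field(default_factory=dict)

    def record(self, requirement: str, reference: str) -> None:
        """Attach an evidence reference to one of the known requirement areas."""
        if requirement not in REQUIREMENTS:
            raise ValueError(f"Unknown requirement: {requirement}")
        self.evidence[requirement] = reference

    def outstanding(self) -> list[str]:
        """Return requirement areas that have no evidence recorded yet."""
        return [r for r in REQUIREMENTS if r not in self.evidence]

    def ready_for_deployment(self) -> bool:
        """True only when every requirement area has evidence attached."""
        return not self.outstanding()


# Hypothetical system and document references.
screening_tool = HighRiskSystemRecord(name="candidate-screening-model")
screening_tool.record("risk_management_system", "RMS-2026-001")
screening_tool.record("technical_documentation", "TD-2026-014")

print(screening_tool.outstanding())
print(screening_tool.ready_for_deployment())
```

Because the obligations are ongoing, a record like this would need to be revisited whenever the system, its data, or its intended purpose changes, rather than completed once.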

The UK Position: What Post-Brexit Organisations Need to Know

The EU AI Act does not directly apply as UK domestic law following Brexit. However, UK organisations are not exempt from its obligations.

Any UK organisation that provides AI systems to EU market users, or deploys AI systems that produce outputs affecting EU citizens or EU-based businesses, is subject to the Act by virtue of its extraterritorial scope. The mechanism mirrors GDPR: the regulation applies based on where the data subject or system user is located, not where the AI provider is headquartered.

The UK Government has taken a different regulatory approach, favouring a sector-led, principle-based framework rather than comprehensive legislation. However, the practical reality for most UK technology companies and businesses with EU market exposure is that EU AI Act compliance is unavoidable.

UK organisations that serve EU markets need to comply with the EU AI Act regardless of the UK's domestic regulatory position. The choice to ignore it because "it is EU law" is not available to organisations whose AI systems touch EU citizens or EU-based businesses.
